Measuring Human Performance on ARC-AGI-3

AGI is here when a system can learn like a human.

However, there is still a gap between what humans can learn and what AI can learn. ARC Prize Foundation exists to understand this gap. The ARC-AGI benchmarks are our tools for measuring it.

Using these tools requires understanding human performance. How do real people, our only proof point of general intelligence, actually learn and solve novel problems?

Today we’re releasing the human dataset for ARC-AGI-3 - a controlled study of 458 participants and the most exhaustive human testing study in the ARC-AGI series to date.

We do not yet have AGI. This dataset is the receipt.

ARC-AGI-3

ARC-AGI-3 is a series of 135 abstract reasoning environments. Play them yourself.

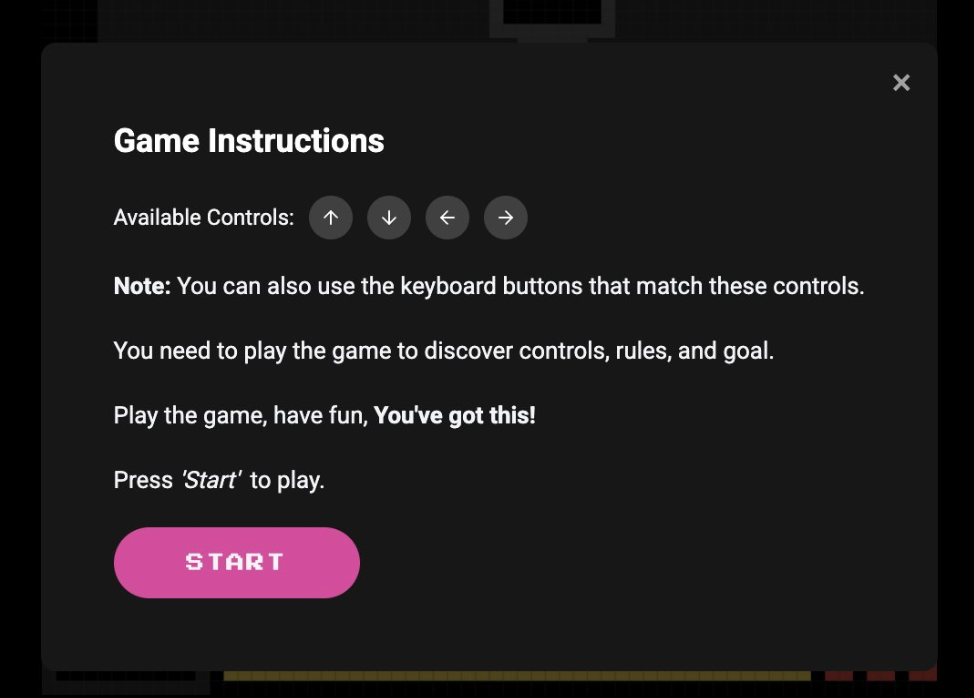

The test taker, whether human or AI, is not given instructions on how to play. They must explore, infer the rules that govern the environment, and come up with a strategy on their own.

A key design constraint of ARC-AGI-3 is that every environment must be solvable by humans with no prior training. To ensure this, we conducted the largest formal study on ARC-AGI human performance ever done.

To dive deeper into ARC-AGI-3, check out the benchmark, play the environments, or watch our launch video.

Human Baselines on ARC-AGI-3

To gather human baselines, we tested members of the general population. Participants spanned a range of education levels, incomes, job sectors, and ages. We did not control for any particular demographic.

We held weekly in-person focus groups at a testing center in San Francisco. No references to ARC Prize Foundation or AI testing were made at any time.

Each testing session lasted 90 minutes. Every participant received a base payment of ~$130, plus an extra $5 for each environment they successfully solved.

Tests were held under "first-run" conditions. This means every participant saw each environment only once and had a single attempt to beat it. This measures the ability to learn and adapt to a novel problem, not the ability to repeat a previously learned solution.

Humans were given the same prior information and affordances that AI is given:

- Both humans and AI get the same "system prompt"

- Test takers are told available environment actions

- Neither is told that this is an ARC Prize or ARC-AGI test

Humans and AI received identical information. Neither had an informational advantage.

As testing progressed, the data we collected showed us what intelligence looks like in practice.

If an environment proved too difficult, it was revised or excluded from the dataset.

The end result is a dataset that demonstrates human solvability of 100% of environments. Every environment was beaten by at least two independent participants, and most were beaten by many more.

ARC-AGI-3 Human Testing Dataset

We're open-sourcing the full Public Demo dataset, which includes 342 human step-by-step replays for our 25 public environments. With it, researchers can analyze:

- Per-environment solvability

- Action counts and efficiency distributions

- Full replay data (step-by-step human interactions)

Solvability

The most basic measure of human performance is solvability: can humans complete these environments? To answer that, we look at completion rates.

Our early findings:

- Not all environments are solved equally - 10 out of 10 participants solved r11l, whereas only 6 out of 12 solved tr87

- Per-level data reveals where players get stuck - in cd82, 2 of 11 players couldn't get past level 2, but most who did went on to solve the entire environment. This suggests a steep onboarding curve rather than overall difficulty

The table below summarizes each of the 25 public demo environments, including how many participants attempted each environment, how many solved it, and links to the top 10 replays (scores = levels completed).

| Env. | Plays | Solves | Top 10 Replays (score) |
|---|---|---|---|
| ar25 | 10 | 5 | 8, 8, 8, 8, 8, 7, 6, 6, 5, 0 |
| bp35 | 14 | 2 | 9, 9, 8, 8, 8, 8, 7, 7, 7, 6 |
| cd82 | 11 | 8 | 6, 6, 6, 6, 6, 6, 6, 6, 2, 2 |
| cn04 | 12 | 6 | 6, 6, 6, 6, 6, 6, 3, 3, 2, 1 |
| dc22 | 11 | 4 | 6, 6, 6, 6, 5, 5, 4, 4, 4, 3 |
| ft09 | 10 | 4 | 6, 6, 6, 6, 3, 2, 2, 1, 0, 0 |
| g50t | 14 | 3 | 7, 7, 7, 5, 3, 3, 2, 2, 2, 2 |
| ka59 | 10 | 3 | 7, 7, 7, 6, 5, 1, 1, 0, 0, 0 |
| lf52 | 11 | 4 | 10, 10, 10, 10, 9, 9, 9, 9, 1, 1 |
| lp85 | 54 | 15 | 8, 8, 8, 8, 8, 8, 8, 8, 8, 8 |
| ls20 | 13 | 6 | 7, 7, 7, 7, 7, 7, 5, 5, 2, 1 |
| m0r0 | 11 | 7 | 6, 6, 6, 6, 6, 6, 6, 5, 4, 1 |
| r11l | 10 | 10 | 6, 6, 6, 6, 6, 6, 6, 6, 6, 6 |
| re86 | 11 | 5 | 8, 8, 8, 8, 8, 6, 6, 6, 2, 2 |
| s5i5 | 11 | 4 | 8, 8, 8, 8, 7, 4, 4, 2, 2, 2 |
| sb26 | 12 | 5 | 8, 8, 8, 8, 8, 7, 6, 4, 4, 4 |
| sc25 | 15 | 10 | 6, 6, 6, 6, 6, 6, 6, 6, 6, 6 |
| sk48 | 14 | 7 | 8, 8, 8, 8, 8, 8, 8, 7, 4, 4 |
| sp80 | 12 | 2 | 6, 6, 5, 4, 4, 2, 2, 1, 1, 1 |
| su15 | 13 | 3 | 9, 9, 9, 4, 4, 4, 3, 3, 1, 1 |
| tn36 | 14 | 6 | 7, 7, 7, 7, 7, 7, 6, 6, 6, 1 |
| tr87 | 12 | 6 | 6, 6, 6, 6, 6, 6, 5, 5, 5, 4 |
| tu93 | 13 | 9 | 9, 9, 9, 9, 9, 9, 9, 9, 9, 4 |
| vc33 | 10 | 6 | 7, 7, 7, 7, 7, 7, 6, 3, 2, 0 |
| wa30 | 14 | 5 | 9, 9, 9, 9, 9, 8, 8, 8, 6, 5 |
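
As a rough illustration, the Plays and Solves columns of the table can be turned into per-environment completion rates with a few lines of Python. The numbers are copied from the table; the variable names (`RESULTS`, `rates`) are ours and not part of the released dataset, and this sketch does not read the replay files themselves.

```python
# (plays, solves) per environment, copied from the solvability table.
RESULTS = {
    "ar25": (10, 5),  "bp35": (14, 2),  "cd82": (11, 8),  "cn04": (12, 6),
    "dc22": (11, 4),  "ft09": (10, 4),  "g50t": (14, 3),  "ka59": (10, 3),
    "lf52": (11, 4),  "lp85": (54, 15), "ls20": (13, 6),  "m0r0": (11, 7),
    "r11l": (10, 10), "re86": (11, 5),  "s5i5": (11, 4),  "sb26": (12, 5),
    "sc25": (15, 10), "sk48": (14, 7),  "sp80": (12, 2),  "su15": (13, 3),
    "tn36": (14, 6),  "tr87": (12, 6),  "tu93": (13, 9),  "vc33": (10, 6),
    "wa30": (14, 5),
}

# Completion rate = solves / plays for each environment.
rates = {env: solves / plays for env, (plays, solves) in RESULTS.items()}

# List environments from lowest to highest completion rate.
for env, rate in sorted(rates.items(), key=lambda item: item[1]):
    plays, solves = RESULTS[env]
    print(f"{env}: {solves}/{plays} solved ({rate:.0%})")

total_plays = sum(plays for plays, _ in RESULTS.values())
min_solves = min(solves for _, solves in RESULTS.values())
print(f"{len(RESULTS)} environments, {total_plays} plays, "
      f"fewest solves for any environment: {min_solves}")
```

The play counts sum to the 342 replays in the release, and every environment has at least two solves, which is consistent with the solvability claim above.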

Efficiency

How humans beat the environments matters too. Efficiency measures not just whether a level is completed, but how many actions it took relative to the human baseline. This connects directly to the definition of intelligence as skill-acquisition efficiency.

ARC-AGI-3 offers the first formal measure of learning efficiency in the ARC-AGI series. The chart below shows the distribution of human per-level efficiency across the public demo set.

ARC-AGI-3 Scoring Updates

Since previewing ARC-AGI-3, nearly one million scorecards have been submitted on public environments. That real-world data helps us stress-test and harden our scoring approach.

What we’ve observed:

- "Luck factor": In at least one level of one game, the environment did not give players enough information to determine the optimal path from the start. An early choice could lock a player out of the minimum action count regardless of how well they played afterward. Imperfect information isn't inherently a problem; it's a natural feature of many environments. However, it becomes an issue when the baseline is tight enough that one unlucky early choice dominates a player's score. Our original human baseline (2nd-best human) let luck into what should be a pure measure of reasoning efficiency.

- First place doesn’t always get 100%: We observed that test takers could beat the human baseline on every single level by a wide margin, but if they were subpar on just one level, the hard cap of 100% per level meant their overall score dropped below 100%. This did not accurately reflect the spirit of measuring a game's action efficiency.

Based on what we’ve observed, we’re announcing two updates to ARC-AGI-3 scoring:

- The per-level baseline is now less sensitive to outlier performances, reducing the impact of luck on individual levels.

A single unusually efficient human run no longer defines the baseline for ARC-AGI-3 scoring. Rather, the baseline now reflects more typical human play. Technical change: the human baseline that normalizes scores moves from the 2nd-best player to the median player per level.

- A single subpar level no longer disproportionately drags down an overall score.

A test taker who generalizes well across an entire environment is no longer penalized by a single subpar level. Technical change: per-level score cap increases from 100% to 115%.
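
To make the two changes concrete, here is a minimal Python sketch. Only the baseline choice (2nd-best versus median) and the per-level cap (100% versus 115%) come from this post. The rest is assumed for illustration: that a level's score is the ratio of baseline actions to actions taken, that an environment score is the unweighted mean of its level scores, and that the overall score is clipped at 100%. The function names and all action counts are hypothetical; the scoring documentation is the authority on the actual formula.

```python
from statistics import median

def level_baseline(human_actions, method="median"):
    """Baseline action count for one level, from human runs that completed it."""
    ordered = sorted(human_actions)
    if method == "second_best":
        # Old rule: the 2nd-fewest actions (falls back to the only run if alone).
        return ordered[1] if len(ordered) > 1 else ordered[0]
    # New rule: the median human action count.
    return median(ordered)

def level_score(actions, baseline, cap=1.15):
    """ASSUMED form: efficiency ratio baseline/actions, capped at `cap`.
    `actions` is None when the level was not completed."""
    if actions is None:
        return 0.0
    return min(cap, baseline / actions)

def environment_score(actions_per_level, baselines, cap=1.15):
    """ASSUMED aggregation: unweighted mean of level scores, clipped at 100%."""
    scores = [level_score(a, b, cap) for a, b in zip(actions_per_level, baselines)]
    return min(1.0, sum(scores) / len(scores))

# Hypothetical human action counts for a single level.
humans = [20, 24, 30, 36, 50]
print(level_baseline(humans, "second_best"), level_baseline(humans, "median"))

# Hypothetical test taker: well ahead of baseline on two levels, behind on one.
baselines = [30, 40, 50]
taker = [20, 25, 55]
old = environment_score(taker, baselines, cap=1.00)
new = environment_score(taker, baselines, cap=1.15)
print(f"cap 100%: {old:.1%}   cap 115%: {new:.1%}")
```

Under these assumptions, the 100% cap leaves the test taker below 100% because the one slow level cannot be offset, while the 115% cap lets the headroom on the other two levels absorb it.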

The net result of these changes is a marginal increase in scores for both humans and AI (+0.5pp) and a scoring method that better reflects our goal of fairly comparing efficiency between test takers.

Our core claim for ARC-AGI-3 remains:

- ARC-AGI-3 is 100% solvable by humans. Every environment was beaten by at least two humans, typically five or more, out of a small uncontrolled panel of around ten members of the general public.

To see how this works in practice, here's the action progression chart for re86 from our 10 human testers.

When AI scores 100% on ARC-AGI-3, it means the AI beat every level of every environment at or above the median human-baseline action efficiency.

To read more about our scoring, see our documentation.

Looking ahead

We believe our human study for ARC-AGI-3 is the largest of its kind for an AI benchmark and we’re proud to fully open source the dataset to support our mission.

Going forward, we’ll continue to develop benchmarks that are grounded in an understanding of human performance.

We’ll continue releasing data, improving methodology, and refining scoring.

If you’d like to join us on this journey, consider making a tax-deductible donation to ARC Prize Foundation or exploring roles on our team.