The Official ARC-AGI Technical Guide

Which version of ARC-AGI are you working with?

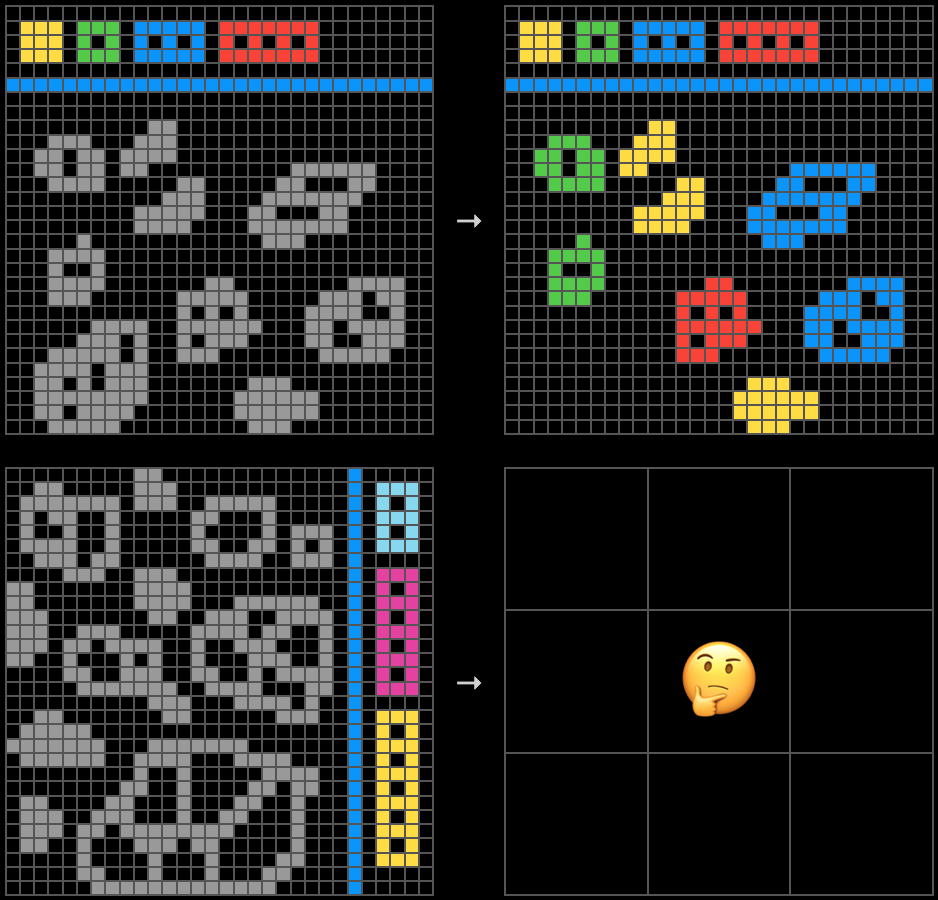

Static Reasoning

ARC-AGI-1 & ARC-AGI-2

Learn the task format, data structure, development tools, and solution approaches for the original, static ARC-AGI benchmarks.

Interactive Reasoning

ARC-AGI-3

The first interactive reasoning benchmark. Build AI agents that explore, plan, and adapt within novel game environments.